The Internet of Things and hyperconnectivity will fundamentally disrupt traditional security safeguards.

Thanks to our increasingly hyperconnected world, I believe that simply having a firewall to protect against your external enemies and threats will become a thing of the past. A security infrastructure that requires data to traverse it to do its job will no longer be enough. In fact, the terms “dirty-side” and “clean-side” currently used to describe network interfaces will have no meaning. Tomorrow, threats will come from what seem like unlikely and trusted sources. It’s going to be second- and third-degree connected business partners that you will have to worry about. When someone once or twice removed from your infrastructure is hacked, you are just as vulnerable as you would be to a nefarious internal actor trying to compromise your data.

There used to be only so many ways to gain entry to a system, but with the explosion of devices and access points, traditional defences are simply not going to work anymore. The cleanly delineated view of a network secured by a firewall separating trusted and untrusted traffic will be antiquated. Instead, security will be better ensured by viewing the network much more holistically and by having technology safeguards in place that monitor user behaviour and handle anomaly detection.

A related subject is the actual handling of such anomalies. If they emanate from sources that are indirectly connected to your infrastructure, like the business partners mentioned above, then blocking such activity would be the easiest action.

Equally, the “internal actor” who is making a deliberate attempt to breach security for malicious purposes can be detected and confined by next-generation safeguards that react to unusual behaviour of user, device, location and application in combination rather than simply as separate elements.
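To illustrate why the combination matters, here is a minimal sketch in Python. It assumes a hypothetical `Event` record and a baseline built from past activity; the names and sample data are invented for illustration and do not describe any particular product. The point is that an event can look normal field by field and still be unusual as a whole.

```python
from collections import Counter
from typing import NamedTuple


class Event(NamedTuple):
    """Hypothetical access record; the field names are assumptions."""
    user: str
    device: str
    location: str
    application: str


class CombinationBaseline:
    """Remembers which full (user, device, location, application) tuples are normal."""

    def __init__(self, history):
        history = list(history)
        # How often each complete combination has been observed.
        self.combos = Counter(history)
        # Values seen per field, to show what a field-by-field check would conclude.
        self.seen = {
            field: {getattr(event, field) for event in history}
            for field in Event._fields
        }

    def fields_all_known(self, event):
        """True if every element, taken separately, has been seen before."""
        return all(getattr(event, f) in self.seen[f] for f in Event._fields)

    def is_anomalous(self, event, min_count=1):
        """True if this exact combination was observed fewer than min_count times."""
        return self.combos[event] < min_count


history = [
    Event("alice", "laptop-1", "London", "crm"),
    Event("alice", "laptop-1", "London", "email"),
    Event("bob", "laptop-2", "Paris", "crm"),
    Event("bob", "laptop-2", "Paris", "finance"),
]
baseline = CombinationBaseline(history)

# Alice's account, on Bob's device, from Bob's location, in the finance app.
suspect = Event("alice", "laptop-2", "Paris", "finance")
print(baseline.fields_all_known(suspect))  # True: each element is familiar
print(baseline.is_anomalous(suspect))      # True: the combination is new
```

A real safeguard would weigh far more signals and tolerate legitimate change, but the sketch shows why checking elements separately would miss this event.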

But what about those innocent users who compromise data without knowing? These are staff who have evolved and enhanced their working environment by embracing shadow IT. Security safeguards have to learn to recognize and respond to seemingly normal data movement and collaboration actions made by employees who put the company’s data at risk. Examples of this could be the use of a personal account in a cloud storage service like Dropbox, online translation tools, or even sending attachments via a personal email account.
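The kind of recognition described above can be sketched as a few simple rules. This is only an illustration: the corporate domain, the service-to-category table and the `Transfer` record are all hypothetical, and real DLP or CASB products rely on much richer content and context inspection.

```python
from dataclasses import dataclass

# Assumptions for illustration only: a made-up corporate domain and a tiny
# lookup table mapping service domains to categories.
CORPORATE_DOMAIN = "corp.example"
SERVICE_CATEGORIES = {
    "dropbox.com": "cloud storage",
    "translate.example": "online translation",
    "mail.example": "webmail",
}


@dataclass(frozen=True)
class Transfer:
    """Hypothetical record of an employee moving data to an outside service."""
    user: str
    service_domain: str
    account: str
    has_attachment: bool = False


def risk_reasons(transfer):
    """Return the reasons, if any, why this routine-looking action puts data at risk."""
    reasons = []
    category = SERVICE_CATEGORIES.get(transfer.service_domain)
    personal = not transfer.account.lower().endswith("@" + CORPORATE_DOMAIN)
    if category == "cloud storage" and personal:
        reasons.append("personal cloud storage account")
    if category == "online translation":
        reasons.append("text sent to online translation tool")
    if category == "webmail" and personal and transfer.has_attachment:
        reasons.append("attachment sent via personal email")
    return reasons


upload = Transfer("alice", "dropbox.com", "alice@home.example")
sanctioned = Transfer("alice", "dropbox.com", "alice@corp.example")
print(risk_reasons(upload))      # ['personal cloud storage account']
print(risk_reasons(sanctioned))  # []
```

The same service appears in both examples; only the account used separates the sanctioned action from the risky one, which is why such activity looks normal to a traditional firewall.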

2018 will see a marked increase in adoption of Data Loss Prevention (DLP) and Cloud Access Security Broker (CASB) products in combination with the holistic visibility tools.

Prediction: Network security will ultimately be driven by machine learning and artificial intelligence.

Machine learning (ML) and artificial intelligence (AI) technologies at the security layer are going to be extremely dependable sentinels. Unlike today’s network security systems, which are largely human administered and maintained, ML and AI will be constantly vigilant against threats and vulnerabilities and will allow us to use the “P” (prevention) in IPS with confidence. The current thinking among security professionals is that if you have an updated database, secure firewall, patched OpenSSL, etc., you’re secure – but this presents a false sense of confidence that can be fatal to the security of the network. Machine learning and AI technologies don’t suffer from overconfidence and preconceived notions of security. They will simply do the job of identifying anomalies and mitigating threats, but far faster and better than today’s largely human-latency-bound security posture model.

Two of the key barriers to the otherwise inevitable adoption of such technology will be the need to have appropriate legislation and accreditation in place for what could otherwise be described as a form of autonomous security, and the “human factor”.

Recognised legal frameworks need to be in place to ensure organisations can demonstrate compliance with a standard. The human factor is the reluctance to hand over control of something that has been in our “manually managed and monitored domain” for so long. History shows it took a while for IT teams to adopt the concepts of virtualization on a data centre scale. Likewise, it will take a while for car drivers to take their hands off the wheel, yet these days as passengers in commercial airliners we think nothing of the planes landing themselves.

Prediction: In the near-term, crowdsourcing will be used more aggressively by IaaS providers as a means of improving their security.

The crowdsourcing model works for security because history has shown that the more eyeballs you have on a problem, the faster vulnerabilities will be found. WEP, the initial encryption standard released as part of the first wireless networking standard, is exhibit A. It was found to be riddled with vulnerabilities out the door because it was developed in a closed environment with no input from a broader base of people with an interest in identifying and shoring up any weaknesses.

The lesson was learned from this example and these standards are now open for broader analysis. Bounty programs at Microsoft, Oracle and others also prove this out. Why? Because they ask for help from many people, numbering in the hundreds and more, who are motivated to find bugs or vulnerabilities in their products and make them better and more secure.

Alternatively, if you develop in a silo, your defence against vulnerabilities is only as good as the 5, 10, 20 or so people who work on a particular protocol, and the one thing the team misses will lead to vulnerabilities. If you have hundreds or more people working on these problems, then the chances of finding and fixing vulnerabilities go up dramatically. Therefore, as counterintuitive as it may seem, the more open you are, the more protected you can be. As more and more companies adopt these bug, or vulnerability, bounty programs, this crowdsourcing security model will prove to be one of the most efficient, economical and effective strategies for shoring up the security of the network, just as it has for software and browsers.
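The “more eyeballs” argument can be illustrated with a toy probability model. It rests on a strong simplifying assumption that is mine rather than anything measured: each reviewer independently has the same small chance of spotting a given flaw. The 1% figure below is purely illustrative.

```python
def detection_probability(reviewers: int, p_single: float) -> float:
    """Chance that at least one of `reviewers` independent people finds a flaw,
    when each one finds it with probability `p_single`."""
    return 1.0 - (1.0 - p_single) ** reviewers


# Illustrative assumption: each reviewer has a 1% chance of spotting the flaw.
for team_size in (5, 10, 20, 500):
    chance = detection_probability(team_size, 0.01)
    print(f"{team_size:>3} reviewers: {chance:.1%}")
```

Under these assumptions a team of 10 finds the flaw roughly one time in ten, while 500 reviewers find it more than 99% of the time. Real reviewers are neither independent nor equally skilled, so the numbers are only indicative of the trend.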

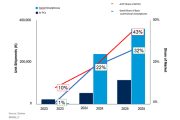

Prediction: Emerging technologies such as augmented and virtual reality, as well as IoT, will drive the need for scaled and automated network management.

These emerging technologies are in the mainstream future of IT. While virtual reality (VR) was once only associated with gaming, now VR as well as augmented reality (AR) are rapidly being adopted in industries such as manufacturing, healthcare, transportation, energy – the list goes on and on. Add to this phenomenon the explosion in IoT devices at the edge of the network – which are generating data at an incredible rate – and you can see that the job of network management and ensuring network performance can quickly consume an organization that does not invest to scale through technology, process automation or partnering with a provider that does. Introducing these new emerging technologies into the workplace requires the ability to isolate their activity for visibility and to understand how, when scaled, they will impact the network and the distribution of compute workload at the edge.

Deploying them responsibly requires a solid foundation of enabling IT services – LAN, WAN, branch and DC computing. Modernizing the network with next-generation software-defined network solutions and services will be key. IT organizations will need to make decisions on sourcing – in-house managed or through a managed service provider – in order to deliver and manage the solution set needed to keep up and stay ahead. Managed network service providers will increase their capabilities in order to take on this responsibility and allow the enterprise to focus on introducing these emerging technologies to enable greater innovation and a competitive edge.