The challenge that IT executives and leaders face in taming businesses’ unstructured data has been extensively discussed. Beyond unstructured data, IT personnel also need to take into account that backup tasks, which are frequently performed by copying and storing large data sets, often lead to duplicated data, which in the long run can quickly drive up data storage needs.

According to Gartner, by 2024 enterprises will have tripled the unstructured data they store as file or object storage compared with 2019, and 40% of I&O leaders will have implemented at least one hybrid cloud storage architecture.

And of course, the staggering amount of data generated by businesses doesn’t come cheap. Gartner’s 2017 Data Quality Market Survey revealed that duplicated and poor-quality data is costing organisations up to $15 million on average. At the macro level, bad data is estimated to cost the US more than $3 trillion per year. In other words, bad data is bad for business. A critical driver of a company’s business and data continuity is therefore not only for IT managers to understand the differences between the various deduplication methods, but also to implement an agile yet cost-efficient data management platform that provides data deduplication capabilities.

The truth about deduplication rates

One of the most commonly utilised deduplication methods is global deduplication. However, deduplication rates, ratios and cost efficiency are sometimes difficult to compare and measure without a universal standard. Hence, several assumptions need to be made so that we can put things into perspective when comparing global deduplication with other methods.

Often, when vendors claim to offer data deduplication features, it turns out that their deduplication does not work across backup tasks, let alone across devices. Deduplication is performed only within each backup task, so if a single full backup occupies 100GB of storage space and the backup is performed 10 times, a total of 1TB of storage space will eventually be taken. Moreover, there have been cases of the per-task deduplication ratio being calculated using the total disk size from the source side rather than the space actually used, thus giving the illusion of a higher deduplication rate.
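The arithmetic behind both points can be made concrete with a minimal sketch. It reuses the 100GB and 10-run figures from the example above; the 500GB total disk size and the `dedup_ratio` helper are hypothetical, introduced only for illustration, and do not describe how any particular vendor reports its ratios.

```python
def dedup_ratio(protected_gb: float, stored_gb: float) -> float:
    """Ratio of data protected to data actually kept on backup storage."""
    return protected_gb / stored_gb


# Figures from the example: ten full backups of 100 GB each,
# with no deduplication between tasks.
used_gb = 100
runs = 10
stored_gb = used_gb * runs  # 1000 GB, i.e. 1 TB consumed

# Assumption for illustration: the source disk is 500 GB in total,
# of which only 100 GB is actually used.
total_disk_gb = 500

# Ratio based on the data that really exists on the source.
ratio_from_used = dedup_ratio(used_gb * runs, stored_gb)

# Ratio based on total disk size: same storage consumed, bigger headline number.
ratio_from_total = dedup_ratio(total_disk_gb * runs, stored_gb)

print(f"stored: {stored_gb} GB")
print(f"ratio from used size:  {ratio_from_used:.1f}:1")
print(f"ratio from total size: {ratio_from_total:.1f}:1")
```

Under these assumptions the storage consumed is identical in both cases; only the numerator of the ratio changes, which is why the basis of a quoted ratio matters as much as the number itself.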

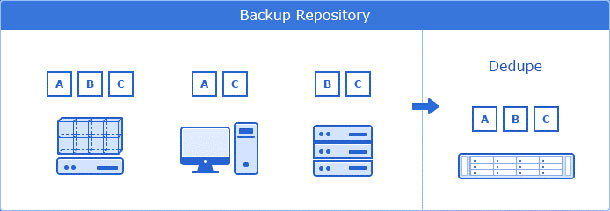

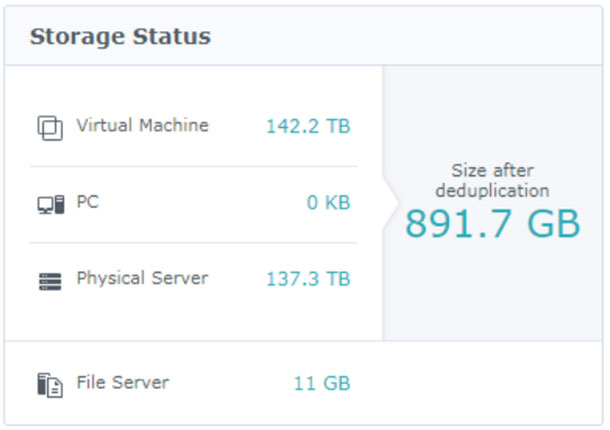

Synology’s Active Backup for Business provides built-in deduplication technology to greatly enhance data storage efficiency.

A method that is more accurate and serves businesses better

On the other hand, with the global deduplication method, deduplication is performed across all physical and virtual environments. Using the same figure as above, with a single full backup occupying 100GB, if it is performed together with incremental backups, the additional storage space needed beyond the original full backup would hold only the data that has been altered. When global deduplication is applied to such a backup strategy, the global deduplication ratio reported by solutions like Synology’s Active Backup for Business is often calculated using the used disk size from the source side, thus giving a more accurate projection of the deduplication efficiency.
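The difference between the two approaches can be illustrated with a toy chunk store. This is a minimal sketch, not Synology’s implementation: the fixed 4-byte chunks, the use of SHA-256 fingerprints and the function names are all assumptions chosen to keep the example small.

```python
import hashlib

CHUNK_SIZE = 4  # bytes; unrealistically small, for illustration only


def chunks(data: bytes, size: int = CHUNK_SIZE) -> list[bytes]:
    """Split data into fixed-size chunks."""
    return [data[i:i + size] for i in range(0, len(data), size)]


def store(data: bytes, seen: set[str]) -> int:
    """Store chunks not already in `seen`; return the bytes newly written."""
    written = 0
    for chunk in chunks(data):
        fingerprint = hashlib.sha256(chunk).hexdigest()
        if fingerprint not in seen:
            seen.add(fingerprint)
            written += len(chunk)
    return written


def per_job_bytes(backups: list[bytes]) -> int:
    """Each backup task gets its own index, so nothing is shared between tasks."""
    return sum(store(data, set()) for data in backups)


def global_bytes(backups: list[bytes]) -> int:
    """One index shared across all tasks, devices and versions."""
    seen: set[str] = set()
    return sum(store(data, seen) for data in backups)


# Hypothetical backups: two devices with mostly identical data,
# plus a later version of the first device with one chunk changed.
backups = [
    b"AAAABBBBCCCCDDDD",  # device A, version 1
    b"AAAABBBBCCCCEEEE",  # device B
    b"AAAABBBBXXXXDDDD",  # device A, version 2
]

print("per-job:", per_job_bytes(backups), "bytes")
print("global: ", global_bytes(backups), "bytes")
```

With a shared index, only the chunks that differ between devices and versions are written again, which mirrors the point above that later backups add only the data that has been altered.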

Which of the two mainstream methods, per-job deduplication and global deduplication, serves businesses better has long been debated. To put things in perspective, we should look at how the value of deduplication is measured for each method: the effectiveness of its data reduction and the maximum achievable deduplication ratio, and its overall impact on storage infrastructure cost and on other data centre resources and functions, such as network bandwidth, data replication, and disaster recovery requirements.

Implement a single solution that is already software-hardware integrated

Last but not least, the total cost of ownership of running servers with different backup and deduplication services, along with their incremental licence and subscription fees, may pose both compatibility and financial challenges for IT managers. The simplest and most cost-efficient approach is to implement a single solution that is already software-hardware integrated. Vendors like Synology offer built-in Active Backup for Business, which requires no licence fee and ultimately lowers the storage cost per TB, relieving IT personnel from having to choose between financial burden and performance.

Global deduplication reduces the storage consumption across versions, devices and platforms.


By Hewitt Lee, Director of Synology Product Management Team.